Hand-Eye Autonomous Delivery: Learning Humanoid Navigation, Locomotion and Reaching

Stanford University

In 9th Annual Conference on Robot Learning, 2025.

Abstract

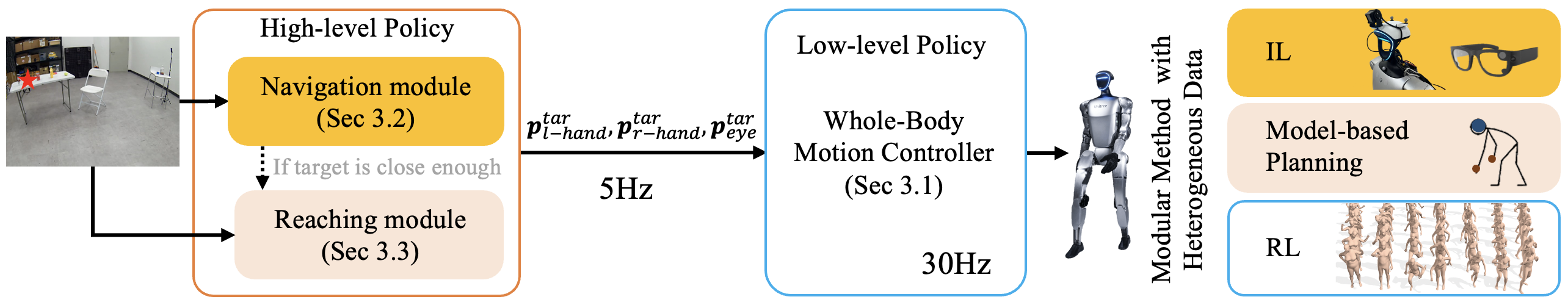

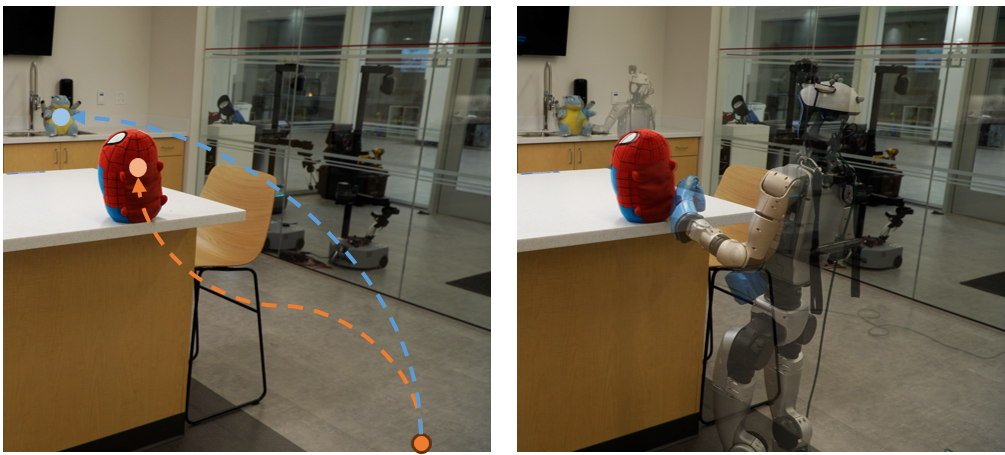

We propose Hand-Eye Autonomous Delivery (HEAD), a framework that learns navigation, locomotion, and reaching skills for humanoids, directly from human motion and vision perception data. We take a modular approach where the high-level planner commands the target position and orientation of the hands and eyes of the humanoid, delivered by the low-level policy that controls the whole-body movements. Specifically, the low-level whole-body controller learns to track the three points (eyes, left hand, and right hand) from existing large-scale human motion capture data while high-level policy learns from human data collected by Aria glasses. Our modular approach decouples the ego-centric vision perception from physical actions, promoting efficient learning and scalability to novel scenes. We evaluate our method both in simulation and in the real-world, demonstrating humanoid’s capabilities to navigate and reach in complex environments designed for humans.

We propose Hand-Eye Autonomous Delivery (HEAD), a framework that learns navigation, locomotion, and reaching skills for humanoids, directly from human motion and vision perception data. We take a modular approach where the high-level planner commands the target position and orientation of the hands and eyes of the humanoid, delivered by the low-level policy that controls the whole-body movements. Specifically, the low-level whole-body controller learns to track the three points (eyes, left hand, and right hand) from existing large-scale human motion capture data while high-level policy learns from human data collected by Aria glasses. Our modular approach decouples the ego-centric vision perception from physical actions, promoting efficient learning and scalability to novel scenes. We evaluate our method both in simulation and in the real-world, demonstrating humanoid’s capabilities to navigate and reach in complex environments designed for humans.

Bibtex

@inproceedings{hand,

title={Hand-Eye Autonomous Delivery: Learning Humanoid Navigation, Locomotion and Reaching},

author={Chen, Sirui and Ye, Yufei and Cao, Zi-ang and Xu, Pei and Lew, Jennifer and Liu, Karen},

booktitle={Conference on Robot Learning},

pages={4058--4073},

year={2025},

organization={PMLR}

}