AdaptNet: Policy Adaptation for Physics-Based Character Control

1 Clemson University, 2 Roblox, 3 McGill University, 4 École de Technologie Supérieure, 5 University of California, Davis, 6 University of Waterloo, 7 University of California, Riverside

In ACM Transactions on Graphics (Proceedings of SIGGRAPH Asia 2023)

Abstract

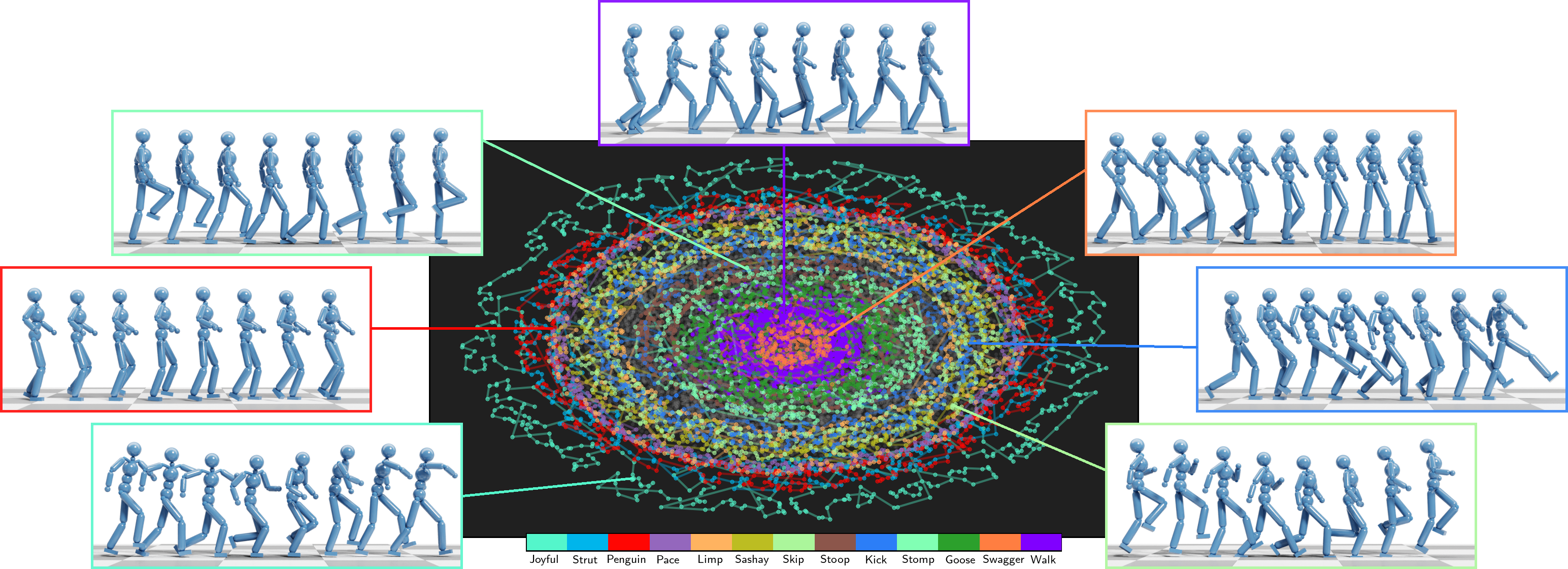

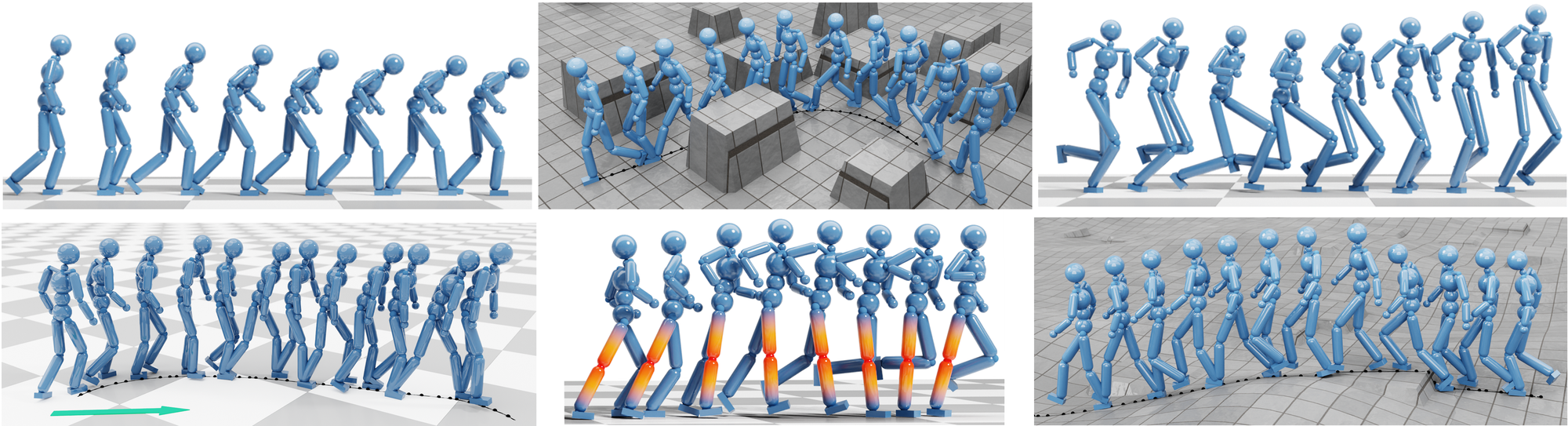

Motivated by humans’ ability to adapt existing skills when learning new ones, this paper presents AdaptNet, an approach for modifying the latent space of existing policies so that new behaviors can be learned quickly from similar tasks rather than from scratch. Building on top of a given reinforcement learning controller, AdaptNet uses a two-tier hierarchy: it augments the original state embedding to support modest changes in a behavior, and it further modifies the policy network layers to make more substantive changes. The technique is shown to be effective for adapting existing physics-based controllers to a wide range of new locomotion styles, new task targets, changes in character morphology, and extensive changes in environment. Furthermore, it exhibits a significant increase in learning efficiency, as indicated by greatly reduced training times compared to training from scratch or to other approaches that modify existing policies.
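The two tiers described in the abstract can be pictured with a small toy model. The sketch below is a hypothetical, plain-Python illustration of the general idea, not the paper's architecture: we assume a one-hidden-layer policy, model the first tier as a trainable offset added to the frozen state embedding, and model the second tier as a LoRA-style low-rank update to a frozen policy layer. All names (`FrozenPolicy`, `AdaptedPolicy`, `w_latent`, `up`, `down`, `rank`) are our own. Both trainable parts start at zero, so before any training the adapted policy reproduces the original one exactly.

```python
import math
import random
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply an (rows x cols) matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Multiply an (n x k) matrix by a (k x m) matrix."""
    cols = list(zip(*b))
    return [[sum(x * y for x, y in zip(row, col)) for col in cols] for row in a]


def rand_matrix(rows: int, cols: int, rng: random.Random, scale: float = 0.1) -> Matrix:
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def zeros(rows: int, cols: int) -> Matrix:
    return [[0.0] * cols for _ in range(rows)]


class FrozenPolicy:
    """Hypothetical pretrained policy: state -> latent embedding -> action."""

    def __init__(self, state_dim: int, latent_dim: int, action_dim: int, rng: random.Random):
        self.w_embed = rand_matrix(latent_dim, state_dim, rng)
        self.w_out = rand_matrix(action_dim, latent_dim, rng)

    def embed(self, state: Vector) -> Vector:
        return [math.tanh(x) for x in matvec(self.w_embed, state)]

    def act(self, state: Vector) -> Vector:
        return matvec(self.w_out, self.embed(state))


class AdaptedPolicy:
    """Assumed two-tier adapter; the base policy's weights are never modified."""

    def __init__(self, base: FrozenPolicy, rank: int, rng: random.Random):
        self.base = base
        latent_dim, state_dim = len(base.w_embed), len(base.w_embed[0])
        action_dim = len(base.w_out)
        # Tier 1 (assumed form): trainable offset added to the latent embedding.
        self.w_latent = zeros(latent_dim, state_dim)
        # Tier 2 (assumed form): low-rank update w_out + up @ down; `up` starts at zero.
        self.down = rand_matrix(rank, latent_dim, rng)
        self.up = zeros(action_dim, rank)

    def act(self, state: Vector) -> Vector:
        z = self.base.embed(state)
        z = [a + b for a, b in zip(z, matvec(self.w_latent, state))]
        delta = matmul(self.up, self.down)
        w = [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(self.base.w_out, delta)]
        return matvec(w, z)


rng = random.Random(0)
base = FrozenPolicy(state_dim=4, latent_dim=8, action_dim=2, rng=rng)
adapted = AdaptedPolicy(base, rank=2, rng=rng)
state = [0.5, -0.2, 0.1, 0.3]
# Zero-initialized adapters reproduce the pretrained policy before training.
assert adapted.act(state) == base.act(state)
```

Starting the trainable parts at zero means adaptation begins from the pretrained behavior instead of a random policy; in this sketch, training would update only `w_latent`, `up` and `down` while the base weights stay frozen.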

BibTeX

@article{adaptnet,
  author = {Xu, Pei and Xie, Kaixiang and Andrews, Sheldon and Kry, Paul G and Neff, Michael and McGuire, Morgan and Karamouzas, Ioannis and Zordan, Victor},
  title = {{AdaptNet}: Policy Adaptation for Physics-Based Character Control},
  journal = {ACM Transactions on Graphics},
  publisher = {ACM New York, NY, USA},
  year = {2023},
  volume = {42},
  number = {6},
  doi = {10.1145/3618375}
}